Misinformation market: The money-making tools Facebook hands to Covid cranks

The video opens with a question: “Could this be bio-terrorism?”

Talking directly into the camera, the unshaven young man continues in a sarcastic American drawl: “I’m sure that there’s no possible way that somebody could play Frankenstein and create the monster that they didn’t mean to, or in many cases, did mean to.”

He moves on to reports in late 2019 that “thousands of CEOs have been stepping down over the last year… Did these CEOs have insight that the global economy was going to tank and something was going on? … It seems like they had a heads up … I think they had a heads up on the economic collapse and they got out of the way.”

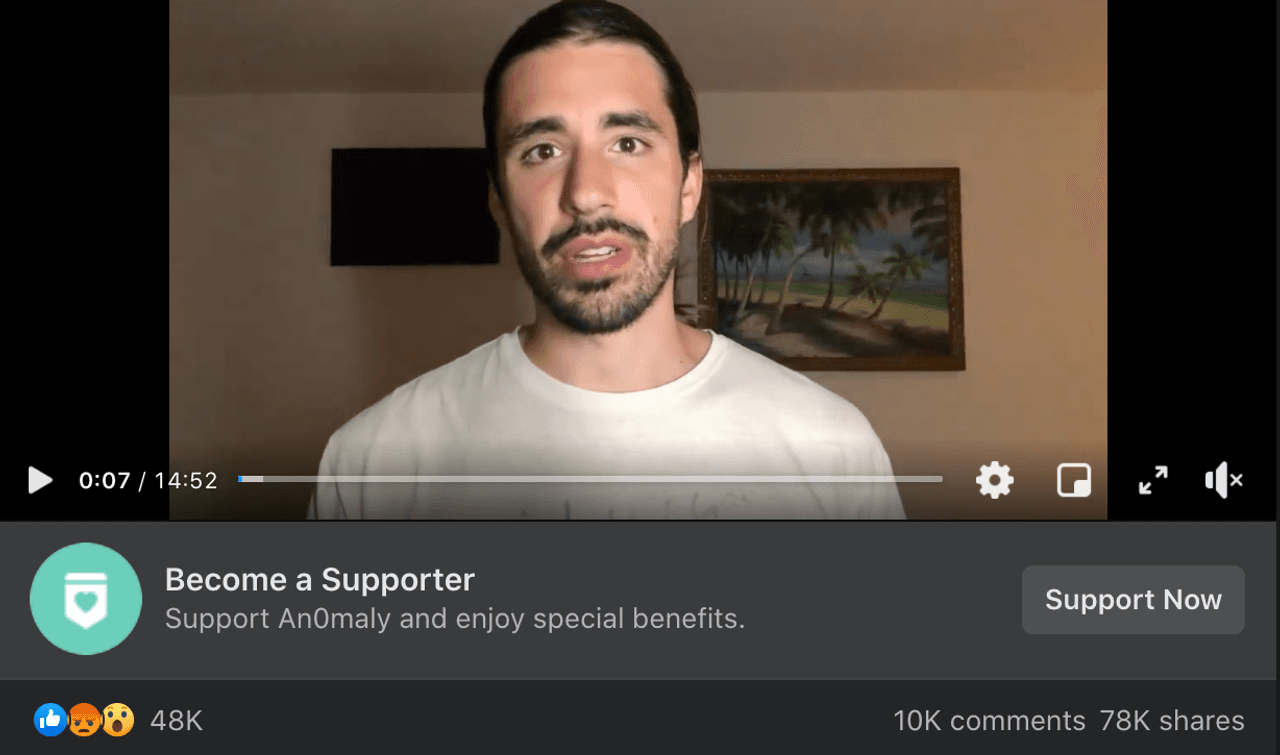

The man is An0maly, a “news analyst & hip-hop artist” with more than 1.5m Facebook followers. The video from March 2020, the early days of the coronavirus pandemic, is one of at least three videos and posts on An0maly’s page that Facebook’s fact-checkers have flagged for containing false or partly false information about the pandemic. Yet even today a strap appears under the videos inviting viewers to pay to “Become a supporter” and “Support An0maly and enjoy special benefits”.

An0maly, real name AJ Feleski, runs one of 430 Facebook pages – followed by 45 million people – identified by the Bureau of Investigative Journalism as directly using Facebook’s tools to raise money while spreading conspiracy theories or outright misinformation about the pandemic and vaccines.

The Bureau’s findings, which likely represent a small snapshot of the vast amount of monetised misinformation on Facebook, show how the site enables creators to profit from spreading potentially dangerous false theories to millions. Some of the posts seen by the Bureau could harm take-up of coronavirus vaccines or lead users to believe the pandemic is a hoax. While Facebook generally does not take a cut of this income – although there are times when it does – it benefits from users engaging with this content and staying on its services, exposing them to more adverts.

Facebook’s policies for creators using monetisation tools include rules against misinformation, especially medical misinformation. In November, Facebook, along with Google and Twitter, agreed a joint statement with the UK government committing to “the principle that no user or company should directly profit from Covid-19 vaccine mis/disinformation. This removes an incentive for this type of content to be promoted, produced and be circulated.”

The Bureau's findings suggest Facebook has failed to adequately implement this agreement and appropriately enforce its own policies. Separately, some parts of the business have seemingly been left without proper oversight of misinformation.

The methods of monetisation vary from page to page – more than two dozen, including An0maly’s, use Facebook-designed “creator” or “fundraiser” tools to receive income directly from their Facebook audience. Hundreds more use the social network’s shopping facilities to sell everything from t-shirts to tarot readings.

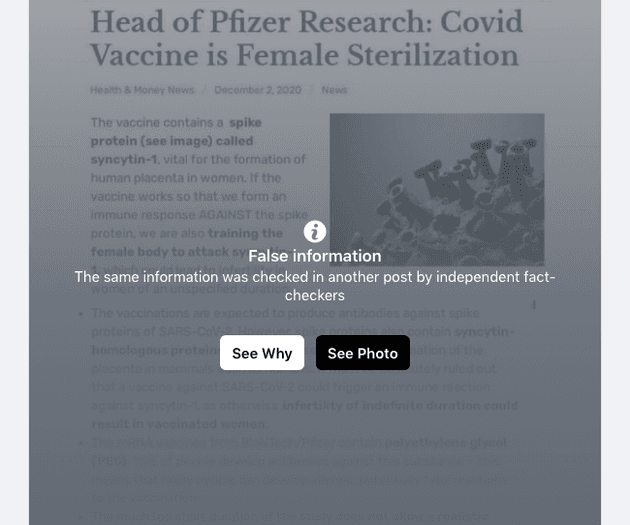

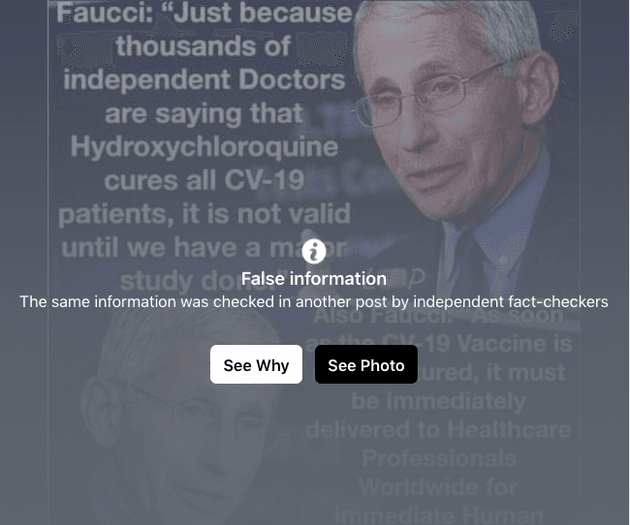

More than 260 of the pages identified by the Bureau have posted misinformation about the coronavirus vaccine. The remainder include false information about the pandemic, vaccines more broadly, or some combination of the two. More than twenty of the pages have even been “verified” by Facebook, gaining a blue tick signalling authenticity.

Organisations including the UN, the WHO and Unesco said in September that online misinformation “continues to undermine the global response and jeopardizes measures to control the pandemic”. Vaccine hesitancy is seen as a significant threat to efforts to return to some semblance of normality, with misinformation on social media cited as a key driver of anti-vaccine sentiment.

Facebook told the Bureau: “Pages which repeatedly violate our community standards – including those which spread misinformation about Covid-19 and vaccines – are prohibited from monetising on our platform. We are reviewing the pages shared with us and will take action against any that break our rules.”

The company has already closed down a small number of pages as a result of that review and said it had removed 12m pieces of Covid-19 misinformation between March and October, and placed fact-check warning labels on 167m other pieces of content.

Wellness gone viral

Veganize, a Portuguese-language page based in Brazil with more than 129,000 followers, appears to be aimed at those practising a plant-based diet. The page offers paid supporter subscriptions in exchange for a supporter badge.

Its pinned post, dated August 30 2020, asks: “If you want the red pill click the link below and find out how much you’ve been cheated your whole life!” The link directs readers to a collection of files hosted on Google, repeating a broad range of conspiracy theories and misinformation about vaccines and the pandemic, including “Plandemic”, a pair of conspiracy-laden videos that briefly lit up the internet in May and August before social networks made strenuous efforts to remove them.

Most of the rest of the page’s recent posts feature memes and videos filled with misinformation about the coronavirus, the efficacy of measures such as masks and the safety of vaccines, including a video clip of the notorious conspiracy theorist David Icke.

Health and wellness pages, like Veganize, form a substantial strand of those spreading misinformation, including those also selling products directly through Facebook. Among the more than 110 pages using virtual storefronts, introduced by Facebook in May, were wellness pages denigrating masks and vaccines while selling “immune-boosting” supplements.

One such wellness page is Earthley, which lists almost 100 products on its Facebook page, alongside regular false posts about vaccines, including discredited claims about links to autism.

The brand, based in Columbus, Ohio, also sells cards customers can hand to “friends and family” containing the false claim that aluminium in vaccines is tied to neurological damage, available in packs of 100 for $4.

Rising stars

Facebook itself can profit from the popularity of brands and individuals who make it big through spreading misinformation. It takes a cut of 5-30% on its Stars currency, used by fans to tip creators streaming live video. The Bureau found two pages using Stars: An0maly and Sid Roth’s It’s Supernatural, a religious programme which has blamed the pandemic on abortion and featured guests describing a dream in which God showed them the virus being created in a Chinese lab. Between them, the pages have reached more than 2.6 million people.

Facebook also briefly took up to 30% of fees paid by new supporters from January last year, but reversed this in August.

Groups whose mission involves spreading information flagged by fact checkers as false have also used Facebook to fundraise. The Informed Consent Action Network (ICAN), a US non-profit, is one of the best-funded organisations in the US opposing vaccinations. This has not gone unnoticed by the big tech companies; Facebook and YouTube removed pages for Highwire, an online show run by ICAN founder Del Bigtree that made claims repeatedly rated as false by fact checkers. ICAN says it is suing the tech companies over the removals.

Bigtree disputed the conclusions of the fact checks against ICAN and his show. Speaking to the Bureau, he said he was merely presenting “credible published science” that contradicts the views of media organisations, global health bodies and scientists cited by fact checkers. He admitted that his statements could “absolutely” put people’s lives at risk if they were wrong, but said the same was true of the World Health Organization. “I absolutely accept that people are going to make choices based on the information that they receive,” he added.

Bigtree disputed suggestions that his views represent a minority of scientific opinion, adding: “I don’t care about consensus even if there is one because consensus has never ever led to the evolution of science.”

He said the removal of the Highwire Facebook page had impacted ICAN’s ability to reach its audience and raise money. “We had over 300,000 followers. I’m never sure which one of those donate – to, where or how, but any time your communication space is cut off certainly that can curtail your ability.” He added that the organisation had a budget of about $3m, sourced entirely from donations.

Despite removing the Highwire page, Facebook still allows ICAN to solicit donations and fundraisers from its more than 44,000 followers on a page that has had at least two posts flagged by fact checkers. According to its page, ICAN has raised almost £24,000 since February 2020. Facebook must approve organisations signing up to raise funds and vaccine misinformation is explicitly cited as a reason that fundraising may be removed from an organisation.

The page also directs its followers to its other fundraising channels. A January 17 post that includes a link to a list of five ways to financially support ICAN was shared almost 1,000 times.

Claire Wardle, executive director of First Draft, a non-profit organisation fighting misinformation which contributed to the Bureau’s research, believes that the money-making systems Facebook offers could encourage people to spread misinformation.

“It is human nature. We know one of the motivations is financial,” she said. “They have started to believe these things… but then when you are in that circle, you also realise there is a way to make money, then you realise that the more you get hits the more money you are making … It’s more than the dopamine hit, it’s dopamine plus dollars.”

For Facebook, offering ways to make money is probably, more generally, a route to encouraging people to use its platform rather than its competitors’, Wardle added.

Defund, debunk, delete

Facebook has the tools to regulate brands and pages spreading inaccurate information.

In October, An0maly released a video saying he had been “demonetized” by Facebook, losing his ability to sign up new supporters and accept Stars. His posts were being seen by fewer people. In the video and in response to questions via email he accused Facebook of “lying” about the content he posted.

“Facebook demonetized my page, blocked me from using tools & drastically cut my reach. I have the exact reasons why,” he told the Bureau. “Facebook libeled me (either bad AI scanning the title or just biased human staffer) and refuses to right their wrongs.” He added: “They didn’t even have a legitimate or viable example to share in regards to the demonetization.”

He said that he did not want this article to “smear” him or find an “angle to make me look bad” and tie him in with any “sloppy pages who recklessly just post nonsense. I don’t do that”.

Another page, for the French “alternative news” site Planetes360, was keen to draw a distinction between its posts and other misinformation when one of its posts questioning the safety of vaccines was deleted. The site’s editor and founder, Mickael Lelievre, told the Bureau: “It was deleted, under the pretext of medical disinformation … The big question is, do we still have the right to ask questions? … Of course, the people who say the vaccine is terrible, it will kill all of humanity, there will be so many dead, the governments want to kill everyone with this vaccine – I would tell them they’re a bit crazy. But this video, the guy was just asking questions.”

The UK government told the Bureau that Facebook needed to do more to tackle misinformation. “Much more must be done and they need to address the issues which have been raised,” a spokeswoman said. She added that forthcoming online safety legislation would fine social media companies that did not take action.

Facebook has repeatedly flagged posts as false on pages using its monetisation tools

An0maly’s suspension did not last long. By January, his money-making tools and extended audience reach had been reinstated – meaning, he said, that he was looking forward to getting a couple of hundred thousand views on his videos rather than the 10,000 or 20,000 they received while he was demonetized.

In a video he said: “They gave me back my Stars, which is huge, that was a huge source of revenue. They gave me back my ad revenue, huge, they gave me back fan subscriptions … So I am pretty pumped about that. That’s definitely a good way to start my new year.

“Facebook was huge for me. And more importantly than all of that is the reach.”

Three days later, he released a video suggesting that the strain on hospital capacity caused by the pandemic was exaggerated. The next day he posted about his latest venture, an online shop selling everything from jewellery to food, provided it was US-made.

He did not respond to further requests for comment after his reinstatement.

Earlier in the crisis, he had been keen to give back to his audience. The video in which he wondered if thousands of CEOs had advance warning of the pandemic ended on an altruistic note, asking followers who were struggling with money to ask him for help.

“I’ve already helped out multiple families that told me they couldn’t buy groceries or diapers, and I’ll continue to do that,” he said.

“I’ve been successful enough with my videos to make enough to have money for myself and at this point it’s really just numbers on a computer.”

Reporters: Jasper Jackson and Alexandra Heal

Desk editor: James Ball

Investigations editor: Meirion Jones

Production editor: Frankie Goodway

Impact producer: Ben du Preez

Fact checker: Alice Milliken

Illustrator: Isabelle Cardinal

This article is part of our Global Health project, which has a number of funders including the Bill & Melinda Gates Foundation. None of our funders have any influence over the Bureau’s editorial decisions or output.
