Factchecks and fake news – but Facebook still let them post

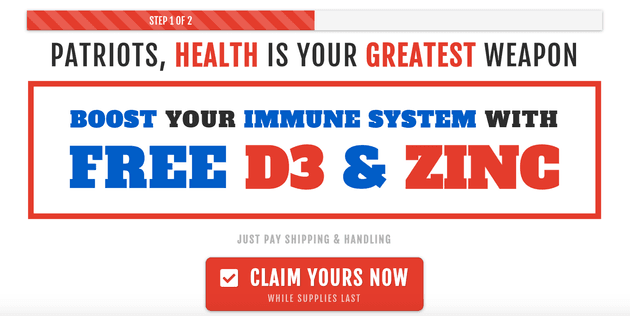

Eric Nepute is a generous man. His website offers “free” zinc and vitamin D supplements, charging only “handling and shipping” at a cost of $9.99.

He is also generous with his time on Facebook, often publishing multiple live videos daily to his 540,000 followers.

“It got released from a lab on purpose, because things like this don’t accidentally happen. This is a biological terrorist weapon on humanity whether you believe it or not,” he says of the coronavirus pandemic in a video from 16 January.

Nepute continues: “There hasn’t been one damn thing else that shown the benefits of preventing Covid-19 except for vitamin D3 … By the way, if you want to know how much D, how much Zinc, how much C, go right now to [website address], somebody type it in below or hit the share button you’ll get a message about it.”

Nepute’s supplement promotion is among the most brazen attempts to convert pandemic misinformation into customers. But unlike the vast majority of the 430 pages identified in a recent Bureau investigation into monetised misinformation on Facebook, Nepute has previously been the subject not only of media coverage but also of a warning from the US Federal Trade Commission (FTC).

The fact that he – and another notorious page operator – continued operating on the platform raises serious questions for Facebook, months after it promised it would take action on coronavirus misinformation and more than a week after it publicly promised to crack down on anti-vaccine material on its networks.

Nepute’s claims in the video, which also include the misleading statement that a Covid-19 vaccine “does not and will not and cannot stop the infection”, have been thoroughly debunked. As far back as April 2020, Nepute was the subject of factchecks over false claims that tonic water could prevent Covid-19 and that death rates were exaggerated.

He was the main case study in a BuzzFeed News article about chiropractors spreading pandemic misinformation. In May, the Federal Trade Commission warned Nepute to stop “unlawfully advertising that certain products or services treat or prevent Coronavirus Disease 2019 (Covid-19)” after a review of his website and Facebook page.

And yet, eight months later, his new video full of false claims has been viewed at least 2.1m times. His other videos, mostly about the coronavirus, were posted more than 4,000 times on a range of public pages and groups in a little over a month this year. Most have been accompanied by a button offering the chance to “Learn More” by visiting his website.

Ella Hollowood, a data journalist who worked on a story for Tortoise last year that cited Nepute as a misinformation superspreader, thinks such accounts are not hard to identify. “When you know that there is a misinformation story going on, it's not difficult using keyword searches to isolate and find potential cases of misinformation,” she said. “Surely it must be possible for Facebook to be doing more on this, if it was possible for us to have found out all that information ourselves?”

Nepute’s page was taken down by Facebook within a day of the Bureau drawing its attention to the misinformation in his videos. A spokesperson said: “We have removed the violating content brought to our attention, including Eric Nepute’s Page on Facebook. We remove Covid-19 misinformation that could lead to imminent physical harm, including false claims about vaccines, cures and treatments.”

Nepute charges $9.99 shipping and handling for his "free" vitamin packs

Nepute charges $9.99 shipping and handling for his "free" vitamin packs

If Facebook could, somehow, claim to be unaware of Nepute’s track record until the Bureau brought it to light, it is harder to argue the same of Joseph Mercola.

Long before the pandemic, Mercola was well known for spreading health misinformation, such as claims that spring mattresses amplify harmful radiation. As well as publishing misinformation about vaccines, the multimillionaire has made large donations to anti-vaccine groups, including nearly $3m over the last decade to the National Vaccine Information Center.

He runs one of the ten most popular health misinformation websites shared on Facebook, according to a report by the campaign group Avaaz in August 2020. He received warning letters over false claims from the Food and Drug Administration in 2005, 2006 and 2011. Last year, the Center for Science in the Public Interest urged the FDA and the FTC to take action against Mercola for false claims about Covid-19 treatments.

And yet, Mercola maintains two huge Facebook pages, one in English with almost 1.8 million followers and a Spanish-language version with more than 1 million, which have been used as channels for misinformation. The latter was among the 430 pages highlighted to Facebook as part of the Bureau’s earlier investigation. The vast majority of these pages remain online.

The English-language page carries a post from August 2019 saying Mercola has “left Facebook”, but just last month it posted an article from his site headlined: “How Covid-19 ‘Vaccines’ May Destroy the Lives of Millions”.

It is a minefield of false claims, including that the mRNA vaccines developed by Pfizer and Moderna are “an experimental gene therapy that could prematurely kill large amounts of the population and disable exponentially more”. It also features an interview with Judy Mikovits, whose claims were at the centre of the thoroughly debunked conspiracy theory video Plandemic.

While the post on Mercola’s page appears to have been removed, it attracted almost 1,200 shares and more than 1,500 likes while it was up, according to data from the Facebook-owned social media tool CrowdTangle. The article was shared at least 100 further times over the next two days.

Mercola’s article has continued to be shared on pages and public groups since the Bureau brought it to Facebook’s attention more than a week prior to publication. In that time Facebook was able to apply a blanket ban to all content from Australian news publishers in response to incoming legislation forcing it to pay them for their content. A number of community organisations and other pages were also inadvertently caught in the ban.

And despite Mercola’s claim to have left Facebook, he has become more active in the days since Facebook updated its policies on February 8, with half a dozen posts promoting his new book, The Truth about Covid-19.

Mercola still regularly posts to his more than 280,000 followers on Facebook-owned Instagram. There, the same misinformation article about the coronavirus vaccines was posted in late January where it attracted more than 4,000 likes in the first few days.

Mercola's new book on the “truth” about Covid is advertised heavily on his Facebook page

A spokesperson for Mercola disputed that his website published misinformation, adding: “Dr. Mercola has been subjected to harassment from Bill Gates’ front groups like TBIJ for some time, and your direct conflict of interest and self-appointed title of ‘Ministry of Truth’ is ridiculous.”

The work of the Bureau is funded by 20 organisations, including the Bill & Melinda Gates Foundation. The Bureau has never given itself the title “Ministry of Truth”.

Alex Kasprak, a senior writer at the fact-checking site Snopes who covers science, believes Mercola’s influence goes far beyond his owned and operated pages on social media.

“Ultimately if you follow the claims back, it has its origins in a Mercola article,” he said. “The talking point emerges even when he himself does not emerge as the source on Facebook. It's an indication of his power … or it's an indication of Facebook's inability to act in a way that is effective.”

Mercola appears to have done quite well from spreading misinformation. According to a 2017 affidavit cited in The Washington Post, Mercola was worth more than $100m, derived mainly from his network of private companies.

The financial benefits of Nepute’s activities online are less clear. But his success in building an online presence is easy to see. Nepute had just 8,000 followers at the end of February last year. Over the course of April 2020, when his misinformation first caught the attention of fact checkers, he added more than 335,000 followers. Despite the fact checks and FTC warning, he has since added a further 130,000 followers.

His goal, stated on his Facebook page, is to send out one million bottles of “free” vitamins.

Kasprak believes Mercola and Nepute are “absolutely” in the misinformation game to make money, but says they may believe what they say.

“Perhaps with both of them there has to be some sort of self-justification if you are going to be a living human being. You have to convince yourself you are actually helping the world.”

Reporter: Jasper Jackson

Desk editor: James Ball

Investigations editor: Meirion Jones

Production editor: Frankie Goodway

Impact producer: Ben du Preez

Fact checker: Alice Milliken

Legal team: Stephen Shotnes (Simons Muirhead Burton)

This article is part of our Global Health project, which has a number of funders including the Bill & Melinda Gates Foundation. None of our funders have any influence over the Bureau’s editorial decisions or output.

Header image: Eric Nepute and Joseph Mercola. Facebook image courtesy of Thought Catalog.